Abstract:

MongoDB is a very popular document database that gives you a huge amount of flexibility when it comes to storing data. On the other hand, the traditional relational model is far less flexible in how data is stored - you're limited to columns and rows. Sometimes you want to go from a flexible model like MongoDB to a relational one, that's what this post attempts to explain using Talend/JaspersoftETL (ETL tools).

I do not want to get into the relational vs non-relational model argument in this post - it's only an example if you need to do this for some reason...I'm pro choice :)

I do not want to get into the relational vs non-relational model argument in this post - it's only an example if you need to do this for some reason...I'm pro choice :)

Scenario:

We have a JSON document in MongoDB that looks like this:

{"id": "0001","type": "donut","name": "Cake","ppu": 0.55,"batters": {"batter": [ {"id": "1001","type": "Regular" }, {"id": "1002","type": "Chocolate" }, {"id": "1003","type": "Blueberry" }, {"id": "1004","type": "Devil's Food" } ] },"topping": [ {"id": "5001","type": "None" }, {"id": "5002","type": "Glazed" }, {"id": "5005","type": "Sugar" }, {"id": "5007","type": "Powdered Sugar" }, {"id": "5006","type": "Chocolate with Sprinkles" }, {"id": "5003","type": "Chocolate" }, {"id": "5004","type": "Maple" } ] }

We want to put the document into three different tables, for use in a relational database:

Main Table:

| ID | TYPE | NAME | PPU |

|---|---|---|---|

| 0001 | donut | cake | 0.55 |

Batter Table:

| BATTERID | BATTERTYPE | CAKEID |

|---|---|---|

| 1001 | regular | 0001 |

| 1002 | chocolate | 0001 |

| 1003 | blueberry | 0001 |

| 1004 | devil's food | 0001 |

Topping Table:

| TOPPINGID | TOPPINGTYPE | CAKEID |

|---|---|---|

| 5001 | None | 0001 |

| 5002 | Glazed | 0001 |

| 5005 | Sugar | 0001 |

| 5007 | Powdered Sugar | 0001 |

| 5006 | Chocolate with Sprinkles | 0001 |

| 5003 | Chocolate | 0001 |

| 5004 | Maple | 0001 |

Talend tMongoDBInput Component

The tMongoDBInput component is what we'll use to extract the data from MongoDB and input into Talend. This is fairly straightforward, you can configure the connection and then you will have to map some fields. We map id, type, name, ppu as regular fields. The batter and topping fields are somewhat special.

Toppings:

The toppings field will be mapped a string and will contain an object in each row that we'll need to deal with later

Batters:

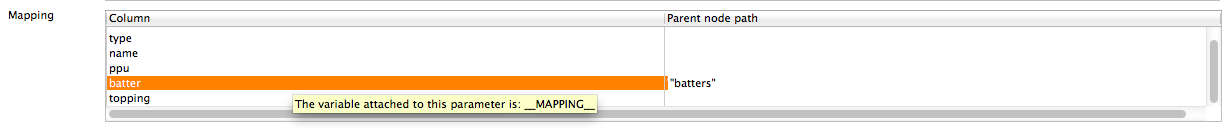

Batters is a nested document within another nested document and we'll need to define a parent node path. tMongoDBInput does not allow you to use node notation to select fields (like batters.batter) so you'll define a parent node like this:

In the end the schema for tMongoDBInput will look like this:

tMap Component - Splitting

Now we'll use a tMap component to split the outputs into three (main, topping, batter)

tExtractJSONFields - Expanding Documents

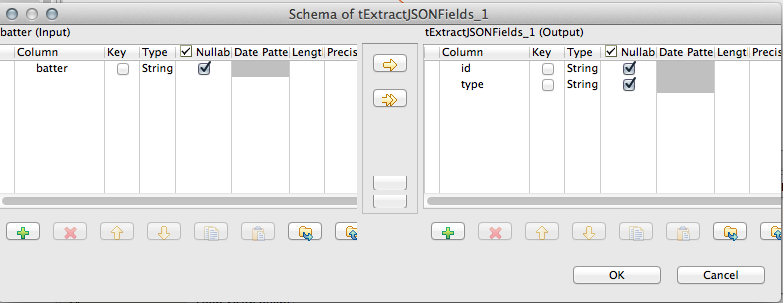

So now we have to deal with the special fields, batter and topping - each one of them contains a list that we want to denormalize into it's own rows. We can use the tExtractJSONFields component for this. The component has a couple of key things: schema, Loop XPath Query and XPath query. This determines what fields to map.

Schema:

Then set the Xpath queries:

And finally glue it all together:

Note that we have 3 input rows, then for each batter and topping we are extracting.

So now we have to deal with the special fields, batter and topping - each one of them contains a list that we want to denormalize into it's own rows. We can use the tExtractJSONFields component for this. The component has a couple of key things: schema, Loop XPath Query and XPath query. This determines what fields to map.

Schema:

Then set the Xpath queries:

And finally glue it all together:

Note that we have 3 input rows, then for each batter and topping we are extracting.

If you are interested in this example, you can download it here:

- Data: This is a JSON object you can import with mongoimport (like mongoimport --jsonArray --collection nestedSample < doc.json)

- JaspersoftETL Job: This job works with JaspersoftETL 5.4.1 Big Data Edition (or presumably the Open Source Big Data edition of Talend)

Recommended Comments

There are no comments to display.